Swampbots and Demonbots

Is Claude a swampman? Is ChatGPT demonic?

Let me outline two ways of thinking about the output of large language models. These LLMs can be characterised as Swampbots or Demonbots: their output is either meaningless, or treated as if meaningful.

Swampbots

The philosopher Donald Davidson is out walking during a thunderstorm when lightning strikes an adjacent tree stump, reducing him to a pair of smoking boots. The stump, quite coincidentally, is rearranged into a replica of the man. This Swampman goes on to take over Davidson’s old life, his friends being none the wiser.

This replica, so Donald Davidson’s argument goes, “can't recognize anything, since it never cognized anything in the first place”.1 It can produce sounds that seem to have a meaning, but it can’t mean anything by them. When Swampman says he loves his wife, according to Davidson’s account of meaning, this would be senseless as the Swampman never had a wife.

This thought experiment has been much argued over, not least because the premise itself is so unnatural. Finally, with LLM chatbots, we have something approaching a Swampman.

When you type commands for ChatGPT, Claude, Grok and other LLMs, the response can appear meaningful, as if you were having a conversation with a human. The output can appear to make claims about its mental states, feelings, attitudes. But perhaps these are Swampbots: the original meaningful utterances in the datasets have been obliterated by the lightning of machine learning and reconstituted. When a Swampbot replies “that’s a good question!” to your inane query, it might appear to be a sycophantic assistant, but it doesn’t even intend anything by it. It has no intentions. There isn’t even an “it” there.

Ah, but I can hear you say already: you don’t follow the account of meaning that Davidson (and Putnam) give. Imagine a Swampbook, appearing ex nihilo in your attic, a perfectly formed book with no author. You could read it, and the sentences would be meaningful. It would be indistinguishable from any other book. Say it took the form of a diary: you would have no reason to believe it was fact or fiction, but you could treat it as either and get a good story out of it either way. While there is no real intention or agent behind the facsimile of conversation that a Swampbot can give, we can clearly understand the sentences, and for questions of fact they can even be true or false. So perhaps these LLMs are less like Swampbots, and more like Demonbots.

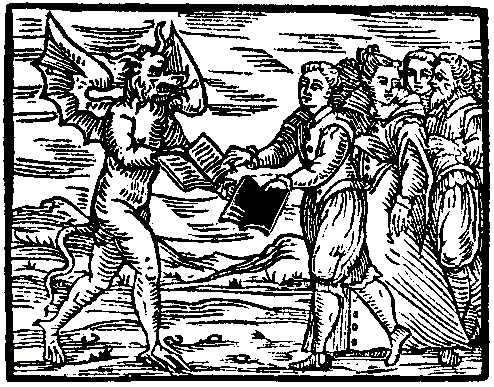

Demonbots

In 1580, Jean Bodin, a French witch trial judge, opined:

It is certain that the devils have a profound knowledge of all things. No theologian can interpret the Holy Scriptures better than they can; no lawyer has a more detailed knowledge of the testaments, contracts and actions; no physician or philosopher can better understand the composition of the human body, and the virtues of the heavens, the stars, birds and fishes, trees and herbs, metals and stones.2

This is the exact claim made of LLMs by their most eager promoters. No human can summon forth anywhere near the breadth of knowledge that these computer programs can. They will readily answer your every question. And like a Prince of Lies, these programs pepper their output with fabrications to lead people astray…

Ah, but this goes too far! They know not what they do.

When people pray, they situate a character outside themselves whom they can beseech. When we curse demons for bad luck, thank our parking angels, or mumble about Borrowers mislaying clothing pegs, we’re making a person out of a situation. When I read Pride and Prejudice, I suspend disbelief and treat Mr Darcy as a real person. Anyone who has dealt with a home printer knows they are malicious entities. We are so good at doing this that we don’t even know that we’re doing it. ChatGPT, Claude and co are demons in this same way: fictional entities we pretend are real.

We take a stance towards the output of these LLMs, and treat it as if it were the output of a real person. (Many people get truly deluded at this step.) When you read the Swampbook that appeared in your attic, you take a stance towards it, treating it as either fiction or non-fiction.3 In the same way, the meaning of the output of a ChatGPT query emerges through us treating it as if it were the utterance of a real person.

This is the pact we make with the Demonbots.

2. As quoted in The Encyclopedia of Witchcraft and Demonology, Rossell Hope Robbins, 1959.

3. For more on this idea of the fictive stance, see Truth, Fiction, and Literature, Lamarque and Olsen, 1994.